Cost-Based Hybrid Query Optimization in MotherDuck

2024/11/04

By

Jeewon Heo, Peter Boncz (supervisor), Boaz Leskes (daily supervisor, MotherDuck), Stefan Manegold (2nd reader)

Overview

When MotherDuck runs a query, it has to make a fundamental decision: which parts should execute on your laptop, and which parts should run in the cloud? Get it right, and you save time and bandwidth. Get it wrong, and you're shuffling data across the network for no good reason.

MotherDuck's original optimizer used a simple heuristic — always move the "smaller" side of a join to wherever the larger side lives. It's a reasonable rule of thumb, but it's greedy: it makes locally optimal choices that don't always add up to a globally optimal plan. And the heuristic for estimating which side is "smaller" was often wrong.

This thesis replaces that heuristic with a proper cost-based optimizer built on dynamic programming. The results speak for themselves: 1.22x average speedup on TPC-H, 2.06x on TPC-DS, and individual long-running queries seeing over 17x improvement.

The Problem

In a hybrid execution system like MotherDuck, every join in a query plan creates a decision point. If one table lives locally and another lives in the cloud, something has to move. The question is what moves where — and those decisions compound across an entire query plan.

The old optimizer walked through the plan bottom-up, greedily picking the cheapest move at each step. But a locally cheap decision can force expensive transfers later. Consider a chain of three joins: moving a small intermediate result to the cloud might look cheap in isolation, but if the next join needs that result back on the client, you've paid for two network round trips instead of zero.

The Solution

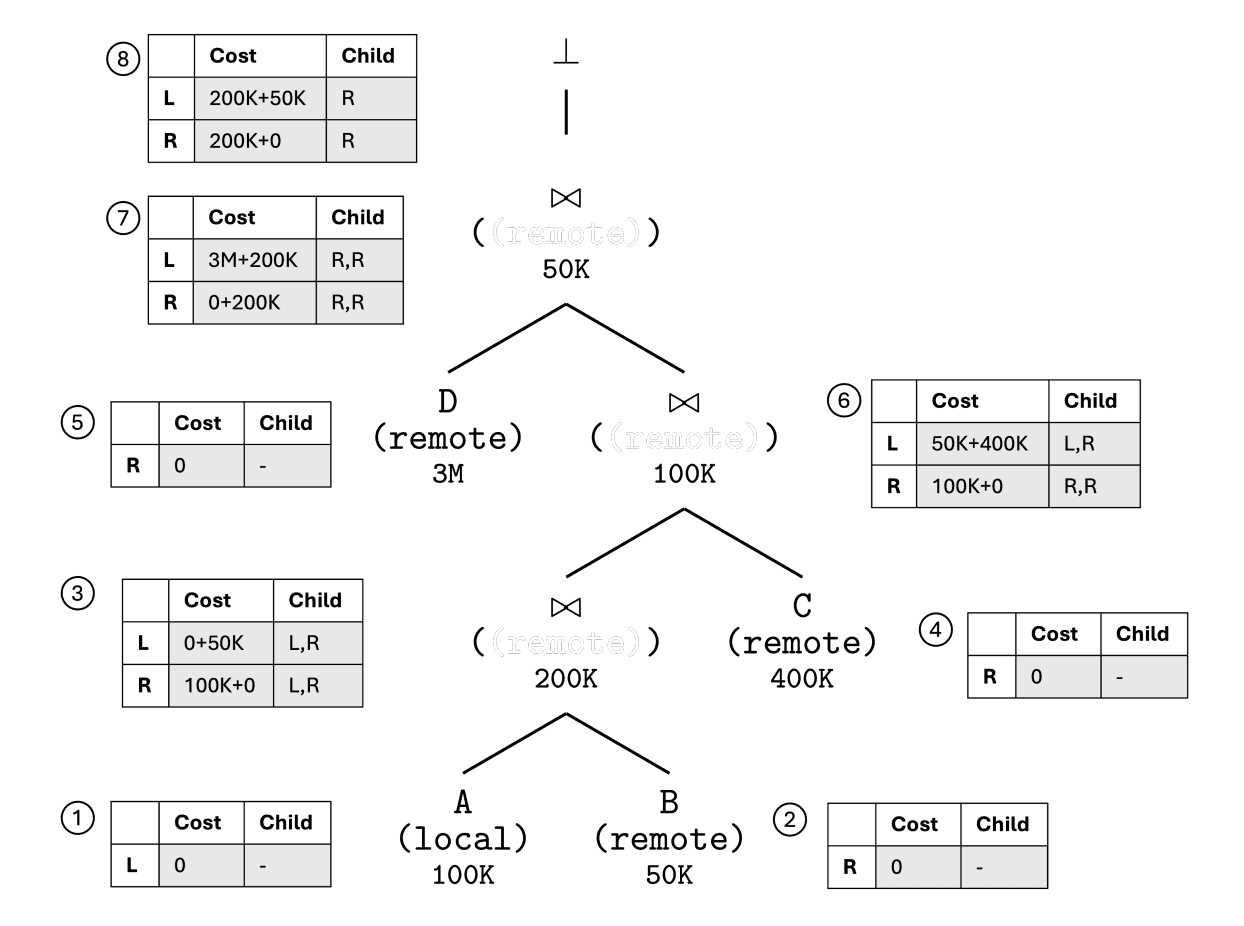

The new optimizer frames site selection as a dynamic programming problem, borrowing a key insight from the classic System-R optimizer: the concept of interesting orders — except here it's interesting sites.

The idea is elegant: when building up partial query plans from the bottom, don't just keep the cheapest plan for each subexpression. Also keep plans where the result ends up at a different site, because that placement might pay off in a later join. A plan that costs slightly more now but leaves its output in the right place can save a network transfer later.

Cost is defined as the estimated amount of data transferred across the network, approximated using cardinality estimates from DuckDB's statistics.

Fixing the Foundation

Along the way, the thesis uncovered and fixed several bugs in how DuckDB and MotherDuck handle cardinality estimates — the statistics that the optimizer depends on to make good decisions:

- Lost cardinality information: DuckDB's join order optimizer would compute good estimates, but subsequent optimization rules (or MotherDuck's plan serialization) would discard them.

- Ignored filter selectivity: When estimating remote table sizes, MotherDuck wasn't accounting for WHERE clause filters, leading to inflated cardinality estimates and potentially wrong join orders.

These fixes are now merged into both the MotherDuck and DuckDB codebases.

Results

The evaluation compares the heuristic optimizer against the cost-based optimizer across three standard benchmarks:

| Benchmark | Average Speedup | Best Individual Speedup |

|---|---|---|

| TPC-H | 1.22x | — |

| TPC-DS | 2.06x | 17x |

| JOB (Join Order Benchmark) | 1.01–1.13x | 38–50x |

The pattern is clear: long-running queries benefit the most. These are queries with complex join graphs where site placement decisions compound — exactly where a global optimization strategy should shine.

Short-running queries sometimes see slight slowdowns. The culprit is usually network latency: when a query only takes a few hundred milliseconds, the overhead of an extra bridge operator (even if it transfers less data) can dominate.

Latency and Beyond

The thesis also runs preliminary experiments with a cost model that accounts for network latency as a constant per-bridge cost. This helps significantly for JOB queries (average speedup jumps to 1.13x, with some queries hitting 50x), suggesting that a richer cost model — one that weighs latency, bandwidth, and compute — could push the optimizer even further.

The DP framework is designed to be extensible: new cost factors can be added as terms in the cost function without restructuring the algorithm.

About

This is a Master's thesis from the joint UvA-VU Master of Science program in Computer Science, completed in November 2024. The research was conducted in collaboration with MotherDuck, with Boaz Leskes (MotherDuck) as daily supervisor and Peter Boncz (CWI/VU) as primary supervisor.

Get the full document