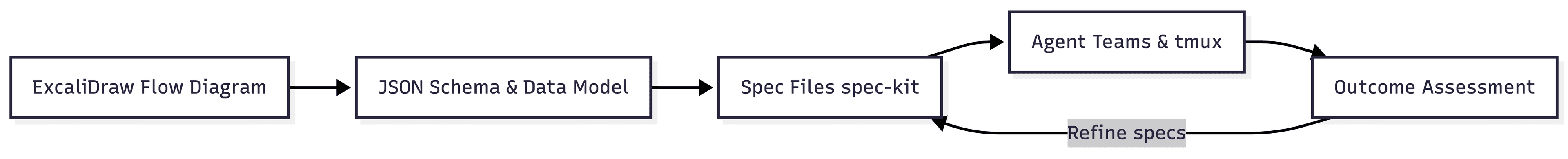

Over the years, Mark has changed his workflow. In this part, he shows how he runs coding agents with tmux and how he reviews and checks the outcome.

Running Agents in Parallel: Agent Teams and tmux

After putting the upfront effort into the specs, he hands them to agents to implement. For this he uses a Claude Code feature called Agent Teams, which can be activated in Claude's settings.json with CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS.
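As a rough sketch of what activating the flag could look like, the snippet below merges it into an existing settings dictionary and prints the resulting JSON. It assumes that settings.json carries environment variables under an `env` key and that the value `"1"` turns the feature on; both are assumptions to verify against the current Claude Code documentation. The `EXISTING_VAR` entry is a hypothetical placeholder.

```python
import json


def enable_agent_teams(settings: dict) -> dict:
    """Return a copy of the settings with the agent teams flag set.

    Assumption: environment variables live under an "env" key and the
    value "1" enables the experimental feature.
    """
    updated = dict(settings)
    env = dict(updated.get("env", {}))  # keep any variables already defined
    env["CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS"] = "1"
    updated["env"] = env
    return updated


if __name__ == "__main__":
    # Hypothetical existing settings, only here to show that they are preserved.
    existing = {"env": {"EXISTING_VAR": "value"}}
    print(json.dumps(enable_agent_teams(existing), indent=2))
```

Copying the dictionaries instead of mutating them keeps the original settings untouched, which makes it easy to compare before and after.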

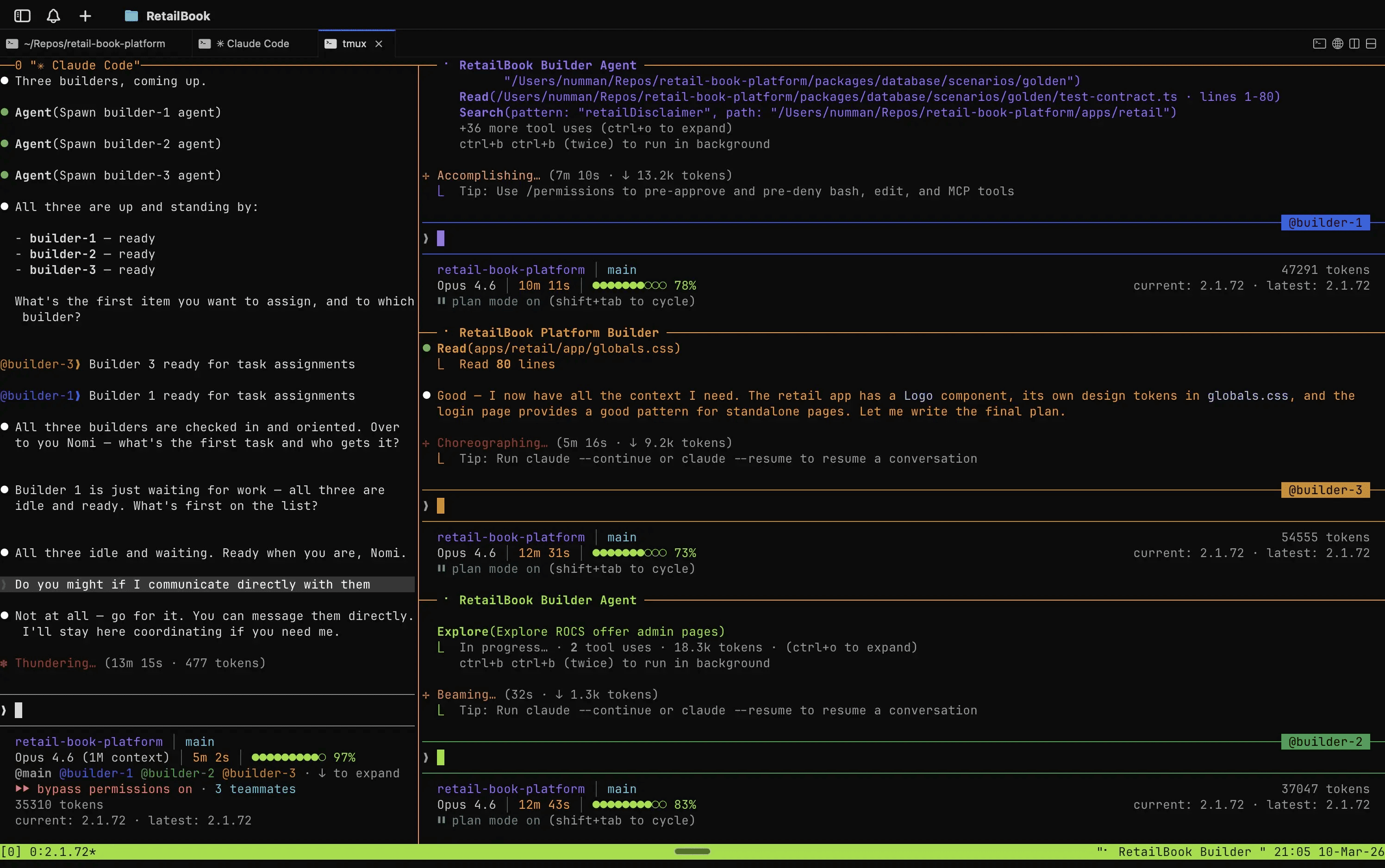

The cool thing about agent teams is that they let you coordinate multiple Claude Code instances working together. One session acts as the team lead, coordinating work, assigning tasks, and synthesizing results. Teammates work independently, each in its own context window, and communicate directly with each other.

Mark spawns multiple agents using iTerm2 and tmux, which I highly recommend for agent work (also check out Zellij for an easier start). The agent teams feature automatically opens the additional terminals in separate panes:

Example from X

It shows Claude self-orchestrating its own team. Think of it as similar to Gastown, Agor, and other AI orchestrators, but integrated into Claude.

Mark's workflow with agent teams is deliberately outcome-focused rather than code-focused. Once the agents complete their run, he checks the result against the original specs and JSON schemas, not the code itself. The only thing that matters is whether the outcome does what was defined.
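To make the idea of checking outcomes rather than code concrete, here is a minimal sketch that compares the records a pipeline produced against an expected shape. It is a simplified stand-in and not Mark's actual setup: a real workflow would more likely use proper JSON Schema documents with a validator library. `ORDER_SCHEMA`, `check_record`, and `check_outcome` are hypothetical names introduced only for this illustration.

```python
from typing import Any

# Hypothetical, simplified schema: field name -> expected Python type.
ORDER_SCHEMA: dict[str, type] = {
    "order_id": str,
    "amount": float,
    "currency": str,
}


def check_record(record: dict[str, Any], schema: dict[str, type]) -> list[str]:
    """Return a list of problems found in one output record (empty if none)."""
    problems = []
    for name, expected in schema.items():
        if name not in record:
            problems.append(f"missing field: {name}")
        elif not isinstance(record[name], expected):
            actual = type(record[name]).__name__
            problems.append(f"{name}: expected {expected.__name__}, got {actual}")
    # Fields the spec never defined are also worth flagging.
    for name in sorted(record.keys() - schema.keys()):
        problems.append(f"unexpected field: {name}")
    return problems


def check_outcome(records: list[dict[str, Any]], schema: dict[str, type]) -> dict[int, list[str]]:
    """Map the index of every failing record to its list of problems."""
    failures = {}
    for index, record in enumerate(records):
        problems = check_record(record, schema)
        if problems:
            failures[index] = problems
    return failures
```

An empty result from `check_outcome` means every record matches the defined shape, regardless of how the agents chose to implement the pipeline.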

Is Reviewing Code Still Needed?

The tough question was whether Mark still reviews code, especially when Claude can generate more of it in a minute than we can ever review. Mark said: "Not locally or on unimportant projects where I'm exploring the limits and potential traps of these powerful tools."

But for production pipelines or when customers asked him specifically for his opinion, he said:

"Along with the wider industry, we are figuring out how to use AI safely at scale."

And at companies that run mission-critical services, such as a bank, you can't just vibe code something. It comes down to the use case, he said.

Beyond distinguishing use cases, he tried different ways of reviewing. First he tried a sophisticated process in which the agents created PRs and he commented on them with improvements and changes. The agents then read the comments, integrated the feedback, and continued. But even that workflow made him too much of a bottleneck. It wasn't scalable enough.

So Mark looked for other ways to review the agents' work.

Outcome-Driven Reviews: Starting from Scratch Again

What he does now is assess outcomes instead. After the rigorous time spent on speccing, he tests the result by running the pipeline, writing tests, or checking the code manually the old-fashioned way.

The key mindset shift here is that the first build is deliberately treated as throwaway. It's requirements exploration through building. You implement the spec once, learn what you got wrong, and expect to discard it.

That's why he tests the outcome. Testing often surfaces learnings he could only have gained by implementing the spec or running actual tests. He feeds these learnings back into the specs, updates the initial requirements, and starts over from scratch, letting the agents create a new outcome based on the updated specs. The cycle is: spec → build → assess → improve spec/assumptions → repeat.
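The cycle can be sketched as a small loop. This is only an illustration under stated assumptions: `Spec`, `iterate`, `build`, and `assess` are hypothetical names, and in practice `build` would be an agent run and `assess` would be a pipeline run, tests, or a manual check.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class Spec:
    """Hypothetical stand-in for a versioned spec."""
    version: int = 1
    requirements: list[str] = field(default_factory=list)


def iterate(
    spec: Spec,
    build: Callable[[Spec], Any],
    assess: Callable[[Any], list[str]],
    max_rounds: int = 5,
) -> tuple[Spec, Any]:
    """Run spec -> build -> assess -> improve spec until no learnings remain."""
    outcome = None
    for _ in range(max_rounds):
        outcome = build(spec)        # every round is a fresh build from scratch
        learnings = assess(outcome)  # what the outcome taught us
        if not learnings:
            break                    # outcome matches expectations
        # Feed the learnings back into the spec; the old build is discarded.
        spec = Spec(spec.version + 1, spec.requirements + learnings)
    return spec, outcome


if __name__ == "__main__":
    # Stub build and assess functions, purely for demonstration.
    def fake_build(spec: Spec) -> dict:
        return {"features": list(spec.requirements)}

    def fake_assess(outcome: dict) -> list[str]:
        needed = "reject events without a timestamp"
        return [] if needed in outcome["features"] else [needed]

    final_spec, final_outcome = iterate(Spec(1, ["ingest events"]), fake_build, fake_assess)
    print(final_spec.version, final_outcome["features"])
```

Note that nothing from a previous build is carried over. Only the spec accumulates knowledge, which is what makes discarding each build cheap.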

This loop gives him a deep and exact way to iterate that is almost deterministic. He can re-run the agents with updated feedback and requirements and get the same or a similar outcome, plus the added updates. That works because of the spec-driven approach, the structure that spec-kit provides, and the precise way he defines his requirements, which leaves the agents little room to hallucinate different inputs from one end-to-end run to the next.

Hallucinations can still happen, but this approach has served him very well: it produces high-quality output he can trust and gives him a sound way to approach a complex problem with the help of agents.

If the outcome meets the quality he expected and does what he wants, he takes it to internal stakeholders for feedback. Then the same process starts again: updating specs, fixing requirement errors or wrong assumptions, and off the agents go again.

Tests and Quality Gates

He writes tests and QA manually. This is another way to make sure the outcome meets his expectations. Most important is the value, he says:

"Value first, then outcome and then worry about other things."

If it's not turning out to be valuable to the stakeholders, he wants to avoid spending more time on it. That's why iterating with agents and building something "quickly", with rigorous specs and definitions in place, has worked well for him so far.

Senior vs. Junior: Working with AI

We move on to an interesting discussion of whether AI helps senior engineers or juniors more. Mark says (he also wrote about it) that AI helps senior engineers more, as seniors "understand the trade-offs of tech debt".

He adds that AI iterations move much faster: we now generate legacy code and architecture constructs in days and weeks instead of years. When Mark iterates with the spec-driven design explained above, multiple different architectures get generated, some of which might have been bad from the very beginning.

As a senior, he thinks, you can give the right guidance from the very beginning and rule out bad outcomes and early "legacy code". No doubt there will still be code and architecture to adapt, but if you lack experience, you basically have no chance of knowing which parts.

Frameworks and Architectures Are for the Experienced

Mark mentions that at Gable, he is building something from scratch. Let's say we are at iteration v4: deep architectural decisions come up, such as whether to choose an Apache Kafka infrastructure, whether to define your schema in JSON or Avro, or whether to use Parquet.

These decisions can only be made with experience. Sure, agents will give you a good middle ground, and with research they will potentially choose the right solution for the current problem. But how do you know what's the best solution for your given business problem? If you have built multiple data platforms and have seen many companies, you just know some of these things or developed an intuition for what's needed.

Combined with that experience, agents are simply a much better tool for seniors than for juniors, who have to more or less blindly trust the assessments the agents make. The quality of the outcome depends on framework and architectural choices, and legacy code accumulates early if a big architectural component is chosen wrong.

Taking this one step further, that experience works like a linter in an editor: it knows things ahead of runtime and can flag wrong choices immediately.